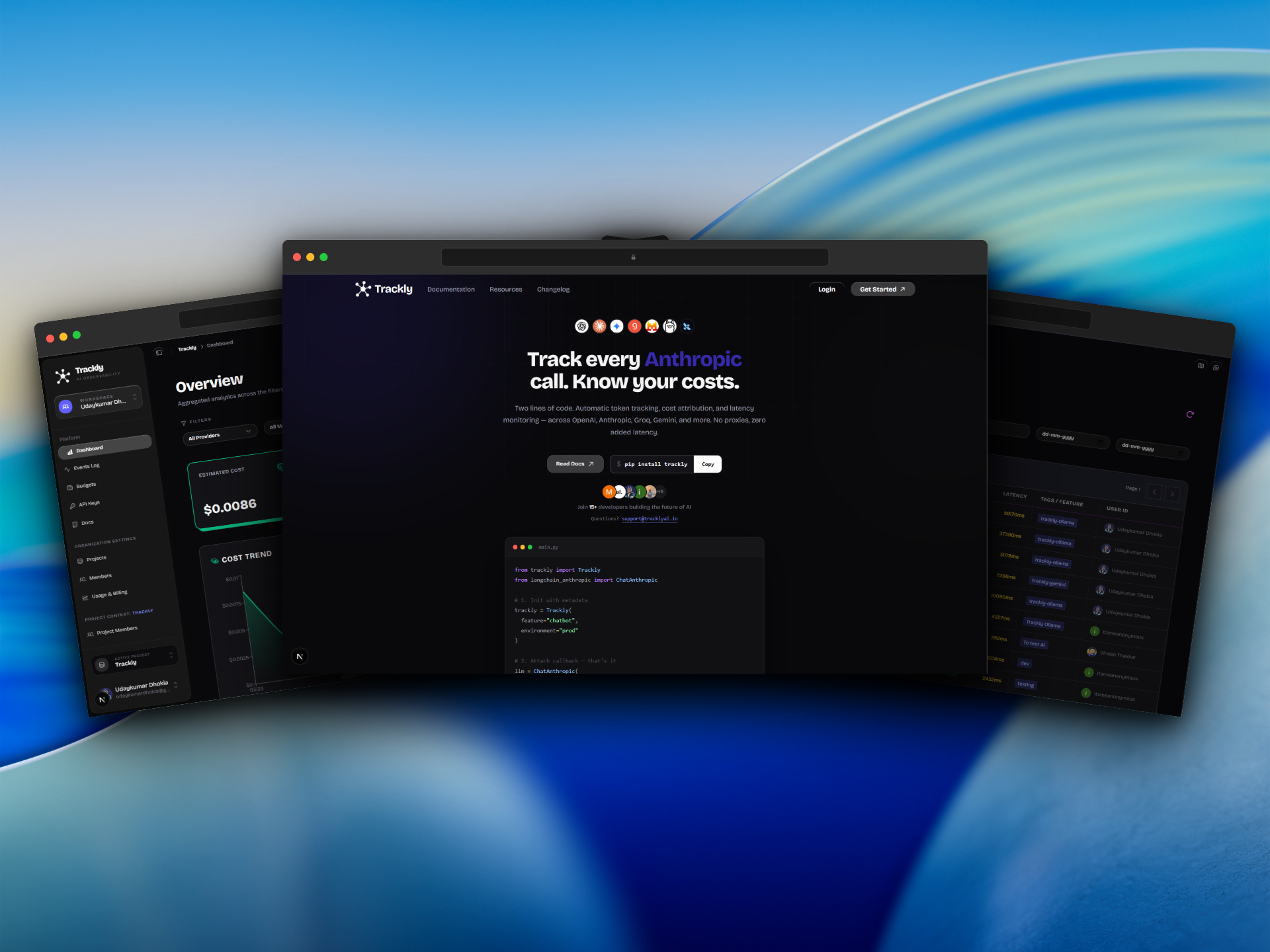

Trackly

AI Decision Engine for production AI systems

The Problem

Teams building AI products had no visibility into how their LLM-powered systems performed in production. Debugging agent failures, understanding cost breakdowns across providers like OpenAI, Anthropic, and Gemini, and comparing model efficiency required manual, ad-hoc processes. There was no unified platform to surface actionable insights from AI execution traces.

The Solution

Built Trackly, a full-stack AI observability platform with an SDK that plugs into any LLM provider. The system automatically ingests execution traces, computes costs in real time from live provider pricing, detects critical paths in agent workflows, and surfaces plain-English insights. Features include run comparison with output diffs, "What-If" model-swap analysis, feature-level cost attribution, and smart budget alerts. Supported providers include OpenAI, Anthropic, Gemini, Groq, Ollama, and Mistral, among others.
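The page doesn't describe how Trackly prices a trace. The following is only a minimal sketch of per-call cost computation, feature-level attribution, and a naive "What-If" model swap. It assumes a hypothetical trace schema (`Span`) and a placeholder price table in USD per million input/output tokens. The model names and prices are invented, not real provider rates. It also assumes that a swapped model would consume the same token counts, which real tokenizers and outputs do not guarantee.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple

# Hypothetical price table: USD per 1M tokens as (input, output).
# Model names and prices are placeholders, not real provider pricing;
# a live system would refresh this from provider price lists.
PRICING: Dict[str, Tuple[float, float]] = {
    "model-large": (5.00, 15.00),
    "model-small": (0.50, 1.50),
}


@dataclass
class Span:
    """One LLM call from an execution trace (hypothetical schema)."""
    model: str
    feature: str  # product feature that issued the call, used for attribution
    input_tokens: int
    output_tokens: int


def span_cost(span: Span, model: Optional[str] = None) -> float:
    """USD cost of one call, optionally re-priced as if `model` had served it."""
    in_price, out_price = PRICING[model or span.model]
    return (span.input_tokens * in_price + span.output_tokens * out_price) / 1_000_000


def cost_by_feature(spans: List[Span], model: Optional[str] = None) -> Dict[str, float]:
    """Feature-level cost attribution: sum call costs per feature tag."""
    totals: Dict[str, float] = defaultdict(float)
    for span in spans:
        totals[span.feature] += span_cost(span, model)
    return dict(totals)


def what_if_swap(spans: List[Span], target_model: str) -> Tuple[float, float]:
    """Naive what-if: (actual cost, cost if every call had used target_model).

    Assumes token counts would be unchanged under the target model.
    """
    actual = sum(span_cost(s) for s in spans)
    swapped = sum(span_cost(s, target_model) for s in spans)
    return actual, swapped


if __name__ == "__main__":
    trace = [
        Span("model-large", "search", 1200, 300),
        Span("model-large", "summarize", 4000, 800),
        Span("model-small", "search", 500, 100),
    ]
    print(cost_by_feature(trace))
    print(what_if_swap(trace, "model-small"))
```

Keeping the price table separate from the trace data lets costs be recomputed whenever prices change. It also lets one pricing function serve both actual and what-if totals.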

The Impact

- ✓ Supports 10+ LLM providers with zero-config SDK integration

- ✓ Auto Insights Engine surfaces findings without manual analysis

- ✓ Real-time cost intelligence with live model pricing

- ✓ Critical path detection for agent debugging

- ✓ Tiered pricing from free to $99/mo for enterprise scale
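Critical path detection is listed but not explained. One common definition, assumed here rather than taken from Trackly, is the longest-duration chain of dependent steps in an agent run. Under that definition, you model the trace as a DAG in which each step starts only after its dependencies finish, then make a longest-path pass in topological order. The step names, durations, and the `critical_path` helper below are hypothetical. The sketch uses the standard-library `graphlib` module (Python 3.9+), which raises `graphlib.CycleError` if the dependencies contain a cycle.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Optional, Tuple


def critical_path(
    durations: Dict[str, float], deps: Dict[str, List[str]]
) -> Tuple[List[str], float]:
    """Return the longest-duration dependency chain and its total duration.

    durations: step id -> duration (e.g. ms); every step must appear here.
    deps: step id -> ids of steps that must finish before it can start.
    """
    graph = {step: deps.get(step, []) for step in durations}
    finish: Dict[str, float] = {}  # earliest finish time of each step
    via: Dict[str, Optional[str]] = {}  # dependency that gated each step's start

    # static_order() yields every step after all of its dependencies.
    for step in TopologicalSorter(graph).static_order():
        preds = graph.get(step, [])
        if preds:
            gate = max(preds, key=lambda p: finish[p])  # latest-finishing dependency
            start = finish[gate]
        else:
            gate, start = None, 0.0
        finish[step] = start + durations[step]
        via[step] = gate

    # The step that finishes last ends the critical path; walk back through gates.
    node: Optional[str] = max(finish, key=finish.__getitem__)
    total = finish[node]
    path: List[str] = []
    while node is not None:
        path.append(node)
        node = via[node]
    path.reverse()
    return path, total


if __name__ == "__main__":
    durations = {"plan": 120, "search": 800, "fetch_docs": 450, "rerank": 200, "answer": 900}
    deps = {
        "search": ["plan"],
        "fetch_docs": ["plan"],
        "rerank": ["search"],
        "answer": ["rerank", "fetch_docs"],
    }
    print(critical_path(durations, deps))
```

In this invented run, `fetch_docs` runs in parallel with `search` but finishes earlier, so it is off the critical path (`plan`, `search`, `rerank`, `answer`, 2020 ms in total). Speeding it up would not shorten the run, while speeding up any step on the path would.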
